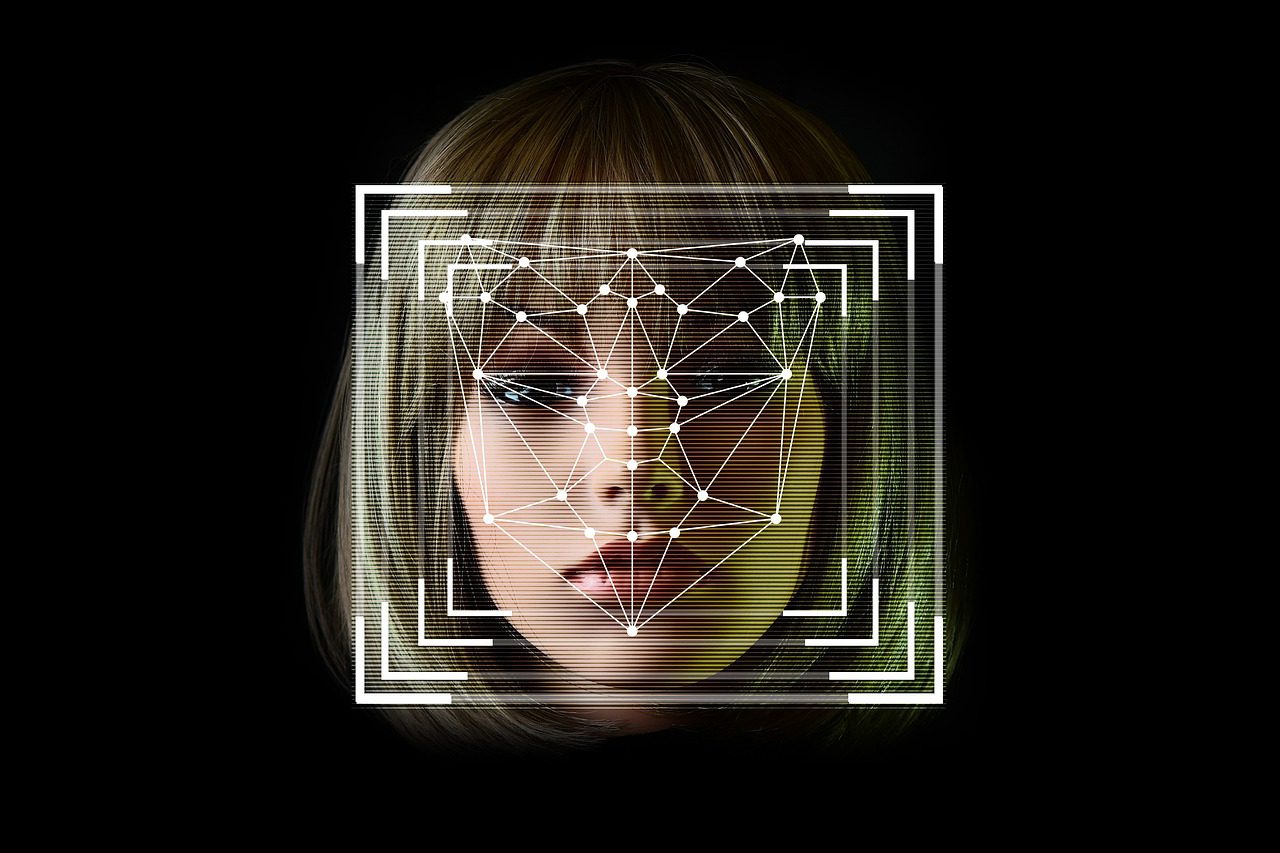

Starting today, Microsoft is phasing out public access to several AI-powered face analysis tools, including one that claims to identify a subject’s emotional state from videos and images.

Experts have long criticized emotion recognition software. They argue that it is wrong to equate outward expressions of emotion with inner feelings, because those expressions vary widely between groups of people.

The move is part of a broader overhaul of Microsoft’s AI ethics policies. The new version of the company’s Responsible AI Standard emphasizes accountability and human oversight in deciding who uses its services.

In practice, this means Microsoft will restrict access to some features of its face recognition services and remove others entirely. Anyone who wants to use Azure Face for face recognition must submit an application and tell Microsoft in detail how they intend to use the system. Some lower-risk capabilities, such as automatically blurring faces in photos and videos, will remain openly available.

Experts inside and outside the company have highlighted the lack of scientific consensus on the definition of ‘emotions,’ the challenges in how inferences generalize across use cases, regions, and demographics, and the heightened privacy concerns around this type of capability.

In addition to restricting public access to its emotion recognition tool, Microsoft is retiring Azure Face’s ability to identify attributes such as gender, age, smile, beards, hair, and makeup. The company says it will stop offering these capabilities to new customers starting today, June 21st, and that existing customers will lose access on June 30th, 2023.

Microsoft will, however, continue to use these capabilities in at least one of its own apps: Seeing AI, which uses machine vision to describe the surrounding environment for people who are visually impaired.
